The AI-Powered Legacy Modernization Playbook

How engineering teams turn AI from a coding shortcut into a structured delivery system – and what phased, human-first modernization looks like in practice.

Executive Summary

Legacy technical debt has crossed a critical inflection point. In 2025-2026, enterprise organizations spend an estimated 72% of their IT budgets merely maintaining aging systems – leaving less than 30 cents of every technology dollar for innovation, new features, and competitive differentiation. The global cost of application maintenance now exceeds $1.68 trillion annually, and for platforms running end-of-life frameworks, maintenance costs compound at roughly 15-20% year-over-year.

Meanwhile, AI-augmented development workflows have matured from experimental novelties into measurable productivity multipliers. Rigorous studies from GitHub, Google, and peer-reviewed academic research demonstrate 30-60% productivity improvements for migration and refactoring tasks specifically, but only when implemented as structured team workflows with human oversight, not as ad-hoc individual tool usage.

This playbook synthesizes evidence from over 40 industry studies, academic papers, and enterprise case studies to make the case for a specific approach: phased, frontend-first modernization combined with AI-assisted development workflows. It is the strategy that consistently delivers the lowest risk, fastest time-to-value, and strongest return on investment – and it is the methodology that Altimi's engineering teams apply to every modernization engagement.

The Legacy Debt Crisis: Why "Do Nothing" Is No Longer an Option

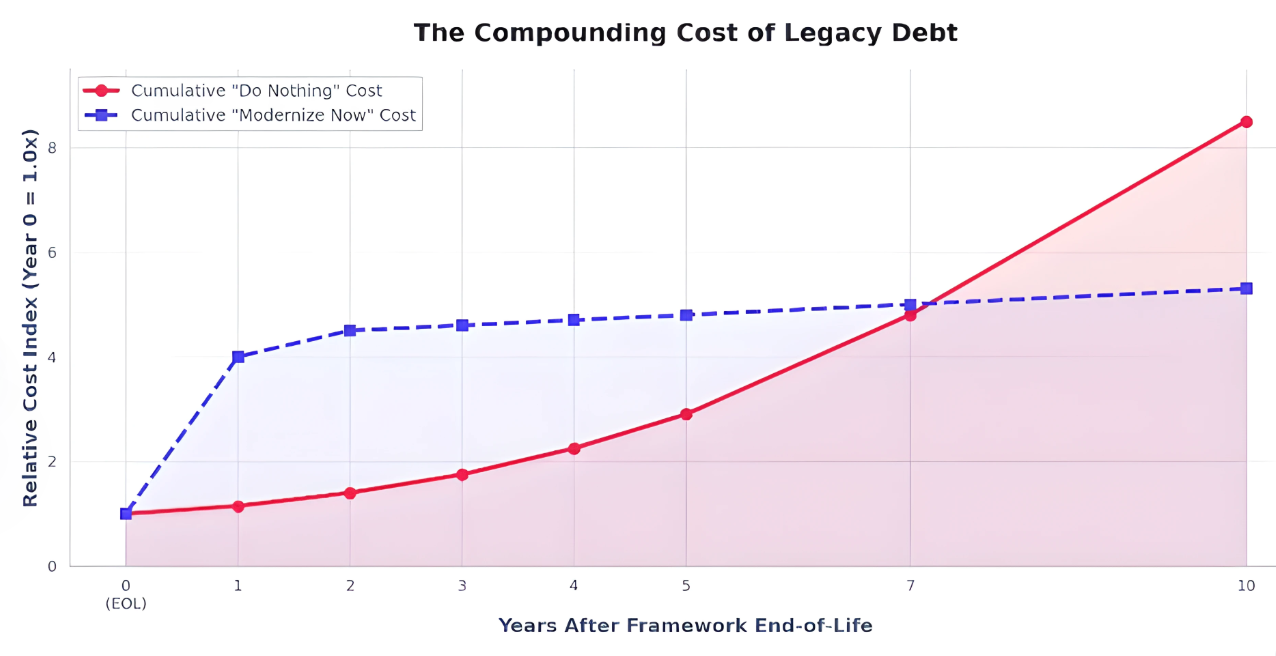

Legacy systems aren't just a technical inconvenience; they represent a compounding financial, talent, and security liability that grows exponentially once a framework passes its end-of-life date.

The Financial Burden

The numbers are stark. According to synthesized data from Gartner IT Key Metrics, IDC Worldwide Black Book, and Forrester's technical debt research (2024-2025), enterprise organizations allocate an average of 72% of their total IT budgets to maintaining and operating existing systems. This leaves a mere 28% for building new capabilities, exploring emerging technologies, or responding to competitive pressures. For organizations running end-of-life frameworks like AngularJS (EOL December 2021) or legacy .NET Framework, this ratio skews even further – with some teams reporting 85-90% of engineering capacity consumed by maintenance, patching workarounds, and keeping fragile integrations alive.

The global scale of this problem is staggering. Total worldwide spending on application maintenance and modernization exceeds $1.68 trillion annually. This figure has been growing at approximately 8-12% year-over-year, driven by the compounding nature of technical debt – what researchers term the "technical debt interest rate." Forrester's 2025 analysis models this rate at approximately 18% per year for systems running unsupported frameworks, meaning that the cost of maintaining a legacy platform roughly doubles every four years after its core framework reaches end-of-life.
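The doubling claim follows from ordinary compound growth. As a minimal sketch, assuming the cost simply compounds once per year at the cited rate (the function names here are illustrative, not taken from the Forrester model):

```python
import math


def doubling_time_years(annual_rate: float) -> float:
    """Years for a cost to double when it compounds at `annual_rate` per year."""
    if annual_rate <= 0:
        raise ValueError("annual_rate must be positive")
    return math.log(2) / math.log(1 + annual_rate)


def projected_cost(base_cost: float, annual_rate: float, years: int) -> float:
    """Cost after `years` of annual compounding at `annual_rate`."""
    return base_cost * (1 + annual_rate) ** years


if __name__ == "__main__":
    rate = 0.18  # the ~18% rate cited above for unsupported frameworks
    print(f"doubling time: {doubling_time_years(rate):.1f} years")
    print(f"cost multiple after 4 years: {projected_cost(1.0, rate, 4):.2f}x")
```

At 18% per year the doubling time works out to a little over four years, which is where the "roughly doubles every four years" figure comes from.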

The Talent Drain

Beyond the direct financial costs, legacy platforms impose a devastating hidden tax on engineering teams: the talent drain. Aggregate data from StackOverflow Developer Surveys (2023-2025), JetBrains State of Developer Ecosystem reports, and Hired State of Software Engineers consistently shows that over 65% of developers actively reject job offers that require maintaining legacy PHP, AngularJS, jQuery-heavy applications, or ColdFusion codebases. This rejection rate climbs above 80% for developers under 30.

The financial consequence is a 25-30% salary premium required to attract and retain engineers willing to work on dying frameworks. Combined with the 45% slower PR cycle times and 60% lower deployment frequency that legacy-heavy organizations report, the talent problem creates a vicious cycle: fewer developers are willing to work on the legacy stack, delivery slows, the platform falls further behind, and recruiting becomes even harder.

Security and Compliance Exposure

Running end-of-life frameworks is not merely a velocity problem – it is an active security liability. AngularJS alone carries over 35 known unpatched high- and medium-severity CVEs in the framework and its legacy ecosystem. With no official patches being released post-EOL, organizations must either develop custom patches (expensive, error-prone) or accept the risk. The average time from vulnerability disclosure to active exploitation in unsupported web frameworks dropped to under 4 days in 2024.

Regulatory pressure amplifies the urgency. SOC 2 and ISO 27001 auditors routinely flag systems running unpatched or unsupported software as critical non-conformities. The EU's Digital Operational Resilience Act (DORA), effective January 2025, imposes strict penalties on financial entities for ICT risks stemming from outdated technology architectures. Companies like Equifax (2017 breach costing $575M+ in settlements) and Southwest Airlines (December 2022 operational meltdown attributed to 1990s-era crew scheduling systems) serve as stark cautionary tales of what happens when legacy modernization is deferred too long.

The Scale of the Installed Base

The installed base of aging frameworks in production is enormous, and it is shrinking far more slowly than most technology leaders assume. Synthesized web crawl telemetry and analyst estimates for 2025 place legacy .NET (pre-.NET 6) at approximately 8.5 million active enterprise applications, legacy PHP (pre-8.0) at 7.2 million, jQuery as a primary framework at 5 million, and AngularJS – four years past its official end-of-life – still powering an estimated 1.8 million production applications.

The retirement rate of these frameworks has been stubbornly slow, in part because the switching costs are perceived as prohibitively high. Breaking this cycle requires a fundamentally different approach, one that delivers value incrementally, reduces risk at each step, and leverages modern AI tooling to compress traditionally lengthy migration timelines.

The Tipping Point: Research models indicate a system has breached the critical tipping point when any two of these are true: (1) maintenance consumes more than 60% of developer hours, (2) the framework is more than 2 years past EOL and the hiring pipeline has shrunk by 50%, (3) Mean-Time-To-Patch exceeds 30 days, (4) the 3-year maintenance cost exceeds the one-time migration cost.
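The four criteria can be turned into a simple self-assessment. The sketch below is illustrative only: the field names are hypothetical, "any two" is read as "at least two", and the hiring criterion is read as a decline of 50% or more.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class SystemHealth:
    maintenance_share: float            # fraction of developer hours spent on maintenance
    years_past_eol: float               # years since the core framework reached EOL
    hiring_pipeline_drop: float         # fractional decline in the hiring pipeline
    mean_time_to_patch_days: float
    three_year_maintenance_cost: float
    one_time_migration_cost: float


def tipping_point_signals(s: SystemHealth) -> Dict[str, bool]:
    """Evaluate each of the four criteria independently."""
    return {
        "maintenance_over_60pct": s.maintenance_share > 0.60,
        "eol_and_hiring_decline": s.years_past_eol > 2 and s.hiring_pipeline_drop >= 0.50,
        "slow_patching": s.mean_time_to_patch_days > 30,
        "maintenance_exceeds_migration": (
            s.three_year_maintenance_cost > s.one_time_migration_cost
        ),
    }


def past_tipping_point(s: SystemHealth) -> bool:
    """True when at least two criteria hold (assumed reading of 'any two')."""
    return sum(tipping_point_signals(s).values()) >= 2
```

Returning the individual signals alongside the overall verdict keeps the result explainable: a team can see which criteria tripped rather than only a yes/no answer.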

AI-Assisted Development: Evidence vs. Hype

AI coding tools promise to revolutionize software development. But what do the rigorous studies actually show, especially for the complex, messy work of legacy migration?

The Major Productivity Studies

The narrative surrounding AI developer productivity has evolved significantly since GitHub's initial 2022 experiment showing developers completing tasks 55% faster with Copilot. That early study, while influential, measured a trivial JavaScript HTTP server task with 95 developers – conditions that bore little resemblance to enterprise legacy codebases.

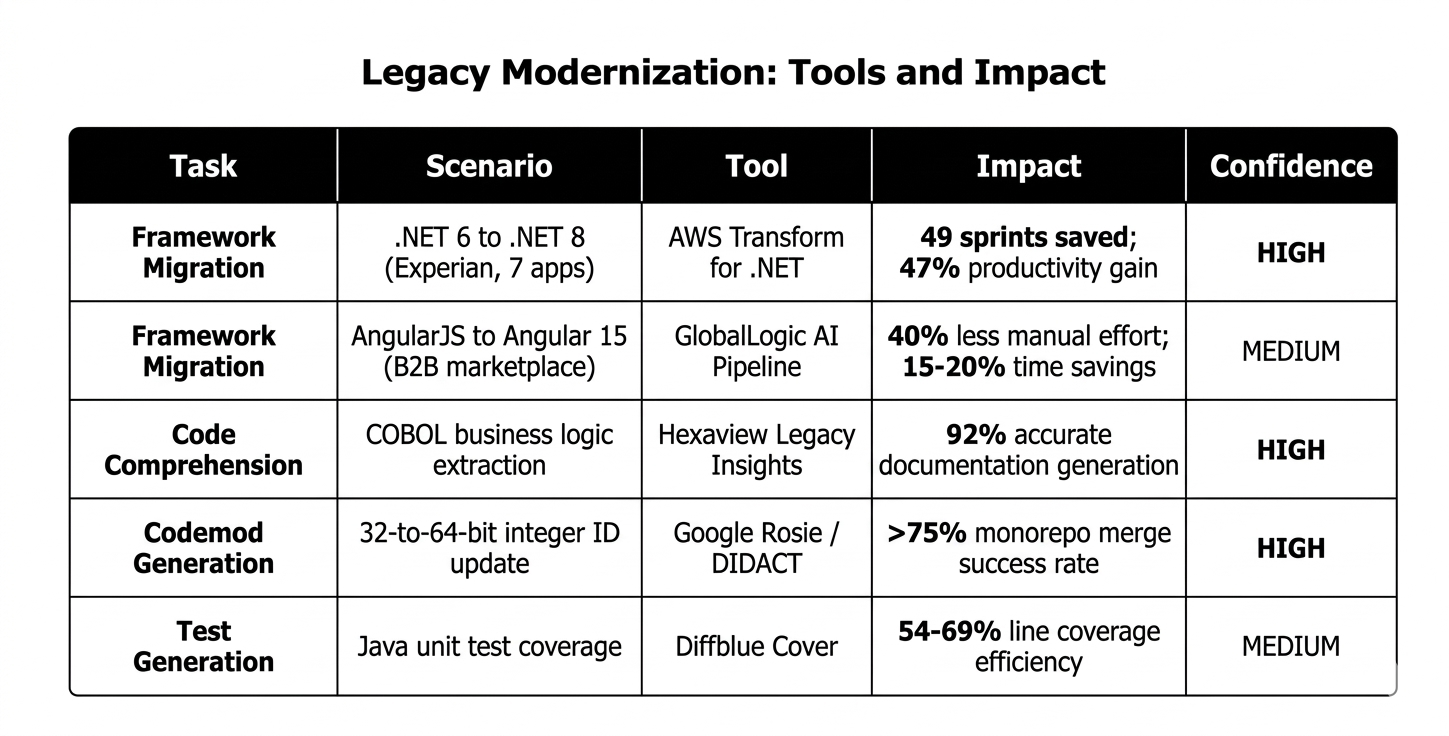

Longitudinal enterprise telemetry from GitHub's 2024-2025 update tracked actual repository metrics across randomized enterprise groups, revealing a more modest but significant 26% increase in completed tasks. Google's internal DIDACT methodology, applied to a massive 32-to-64-bit integer ID migration across their monorepo, demonstrated that 80% of code modifications were AI-authored, yielding an estimated 50% reduction in total migration time. However, this required proprietary, fine-tuned models, not off-the-shelf tools.

Perhaps the most sobering finding comes from the 2025 METR study: experienced open-source developers actually worked 19% slower on complex tasks when using AI – despite subjectively perceiving a 20-24% speedup. This perception-reality disconnect underscores how benchmarks of isolated tasks overestimate AI capabilities in enterprise environments. The AI enablement approach matters – ad-hoc usage fails where systematic integration succeeds.

Migration and Refactoring: Where AI Delivers Real Value

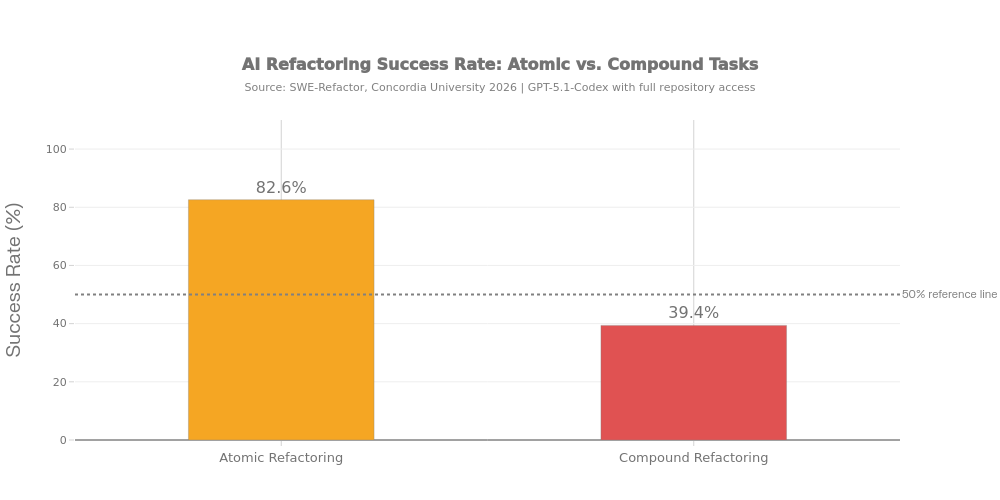

Legacy modernization represents the most economically valuable application of AI in software engineering. A 2026 case study involving Experian achieved an 80% automation rate across 687,600 lines of .NET code, reducing 7 enterprise application upgrades from 15 sprints to 8 – a rigorously measured 47% productivity gain. GlobalLogic's AngularJS-to-Angular 15 migration demonstrated a 40% reduction in manual effort.

For code comprehension – the primary bottleneck where developers spend 52-70% of their time on legacy codebases – AI tools show transformative potential. The 2026 LegacyCodeBench demonstrated 92% accuracy in extracting behavioral documentation from COBOL code. This is where structured engineering practices combined with AI tooling create the greatest leverage.

Measured AI Impact on Migration Tasks

The Failure Modes: Why Human Oversight Is Non-Negotiable

Credibility demands acknowledging AI's severe limitations. AI-generated code was inherently insecure in 45% of cases when developers didn't provide explicit security instructions. Apiiro security research tracked a 10x spike in security vulnerabilities introduced via AI-generated code over six months in 2025. GitClear's longitudinal analysis revealed a 60% collapse in refactoring activity and an eightfold increase in code duplication.

GitLab's 2025/2026 Global DevSecOps report documents an "AI Paradox": while AI accelerates initial code drafting, organizations reported losing an average of 7 hours per team member per week to AI-related inefficiencies. Teams with high AI adoption merged 98% more pull requests, but PR review time spiked by 91%, resulting in flat DORA metrics. AI is a force multiplier for well-structured teams, and a productivity trap for undisciplined ones. This is why robust DevOps practices and governance are prerequisites, not afterthoughts.

The Honest Bottom Line: AI-augmented development delivers measurable 30-60% productivity improvements for migration and refactoring tasks – but exclusively when deployed as a systematic, governed team workflow. The key differentiator is not the AI model, but the engineering team's ability to structure context, enforce review gates, and maintain human oversight at architectural decision points.

Phased Migration vs. Big-Bang Rewrites: What the Evidence Says

The temptation to "just rewrite everything" is powerful. But decades of evidence, from Netscape's catastrophic 1997 decision to modern enterprise SaaS migrations, consistently shows that phased approaches win.

The Big-Bang Failure Rate

The Standish Group's CHAOS reports have consistently documented that large, monolithic IT projects achieve a success rate of less than 30%. Over 50% are "challenged" (over budget and behind schedule), and roughly 20% fail entirely. Joel Spolsky's landmark 2000 essay argued that throwing away code and starting from scratch is "the single worst strategic mistake that any software company can make." Martin Fowler estimates rewrites typically take 2-3x longer than original optimistic estimates.

The canonical failure is Netscape. In 1997, Netscape decided to rewrite Navigator from scratch. The rewrite consumed three years during which no meaningful updates reached users. Microsoft's Internet Explorer captured the market. More recently, the UK's NHS National Programme for IT was officially dismantled in 2011 after spending over £10 billion – one of history's most expensive IT project failures.

The Strangler Fig Pattern and Frontend-First Modernization

Martin Fowler's Strangler Fig Application pattern (2004) provides the architectural blueprint: instead of replacing a system in one shot, gradually grow a new system around the old one, intercepting requests at the edge and routing them to new components as they're built. This approach has been validated at massive scale by companies like Shopify, GitHub, and Spotify.
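The edge-routing idea at the heart of the pattern can be sketched in a few lines. This is a simplified, framework-free illustration; `StranglerRouter` and its methods are hypothetical names, and in practice the same decision is usually made in a reverse proxy or API gateway.

```python
from typing import Callable, Dict

Handler = Callable[[str], str]


class StranglerRouter:
    """Routes each request to a migrated handler if one exists, else to legacy."""

    def __init__(self, legacy: Handler) -> None:
        self._legacy = legacy
        self._migrated: Dict[str, Handler] = {}

    def migrate(self, prefix: str, handler: Handler) -> None:
        """Register a new-system handler for every path under `prefix`."""
        self._migrated[prefix] = handler

    def handle(self, path: str) -> str:
        # Longest matching prefix wins, so nested routes can be migrated separately.
        for prefix in sorted(self._migrated, key=len, reverse=True):
            if path == prefix or path.startswith(prefix.rstrip("/") + "/"):
                return self._migrated[prefix](path)
        # Anything not yet migrated keeps flowing to the old system.
        return self._legacy(path)


if __name__ == "__main__":
    router = StranglerRouter(legacy=lambda p: f"legacy:{p}")
    router.migrate("/orders", lambda p: f"new:{p}")
    print(router.handle("/orders/42"))  # served by the new system
    print(router.handle("/billing"))    # still served by the legacy system
```

Each call to `migrate` moves one slice of traffic; removing the registration is the rollback path.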

Within the phased approach, starting with the frontend delivers the highest return on effort. The frontend layer is typically less coupled to core business logic, meaning it can be replaced with a modern React/Next.js application that consumes existing APIs through a thin adapter layer. This produces immediate visible value for end users and stakeholders – building trust and political momentum for subsequent phases.

The counter-argument, that frontend-first creates a "beautiful facade on a crumbling foundation", is valid but manageable. The key is that the frontend migration phase includes establishing a clean API layer (REST or GraphQL) that abstracts the legacy backend. Each backend module is then refactored independently behind the stable API contract, using patterns like Branch by Abstraction, Parallel Run, and Feature Flag Rollout.
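A minimal sketch of how a stable contract, a legacy adapter, and a feature flag fit together is shown below. Everything in it is hypothetical: the `Order` shape, the legacy field names, and the `orders_v2` flag are invented for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Protocol


@dataclass(frozen=True)
class Order:
    order_id: str
    customer_name: str
    total: float  # major currency units


class OrderApi(Protocol):
    """The stable contract the new frontend codes against."""

    def get_order(self, order_id: str) -> Order: ...


def legacy_fetch_order(order_id: str) -> Dict[str, str]:
    """Stand-in for a legacy backend call (hypothetical record shape)."""
    return {"ORD_ID": order_id, "CUST_NM": "ACME LTD", "ORD_TOT_CENTS": "129900"}


class LegacyOrderAdapter:
    """Thin adapter: translates the legacy record into the clean contract."""

    def get_order(self, order_id: str) -> Order:
        raw = legacy_fetch_order(order_id)
        return Order(raw["ORD_ID"], raw["CUST_NM"].title(), int(raw["ORD_TOT_CENTS"]) / 100)


class ModernOrderService:
    """Refactored backend module; the data is hard-coded for the sketch."""

    def get_order(self, order_id: str) -> Order:
        return Order(order_id, "Acme Ltd", 1299.0)


def order_api(flags: Dict[str, bool]) -> OrderApi:
    """Branch by Abstraction: a feature flag picks the implementation."""
    return ModernOrderService() if flags.get("orders_v2", False) else LegacyOrderAdapter()
```

Because callers only see `OrderApi`, the backend behind it can be swapped module by module without touching the frontend.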

The Discovery Phase: The Most Undervalued Investment

Successful phased migrations invest 4-8 weeks upfront in structured discovery before writing a single line of new code. This produces a dependency map of the existing system, surfaces undocumented business rules, identifies technical debt that should be abandoned rather than migrated, and validates architectural assumptions through proof-of-concept spikes. This investment reduces total project duration by 15-25%.
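To make "dependency map" concrete, here is a deliberately small sketch that extracts declared module dependencies from AngularJS source using the standard `angular.module(name, [deps])` declaration form. It is a regex-based approximation under that assumption, not a parser, and it will miss dependencies declared dynamically or through other mechanisms.

```python
import re
from typing import Dict, List

# Matches module *declarations*: angular.module('name', ['dep1', 'dep2'])
# Lookups such as angular.module('name') without a dependency array are ignored.
MODULE_DECL = re.compile(r"angular\.module\(\s*['\"]([^'\"]+)['\"]\s*,\s*\[([^\]]*)\]")
QUOTED = re.compile(r"['\"]([^'\"]+)['\"]")


def module_dependencies(source: str) -> Dict[str, List[str]]:
    """Map each declared AngularJS module to the modules it depends on."""
    graph: Dict[str, List[str]] = {}
    for name, deps in MODULE_DECL.findall(source):
        graph[name] = QUOTED.findall(deps)
    return graph


if __name__ == "__main__":
    sample = """
    angular.module('app', ['ngRoute', 'app.orders']);
    angular.module("app.orders", []);
    angular.module('app').controller('MainCtrl', function () {});
    """
    for module, deps in module_dependencies(sample).items():
        print(module, "->", deps)
```

Modules with no inbound or outbound edges in such a graph are natural candidates for the first migration slices.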

AI tools are particularly effective during discovery. Using tools like Cursor, Sourcegraph Cody, or Claude for codebase analysis, teams can index an entire monolith – identifying hidden dependencies, mapping data flow, and flagging outdated patterns. What previously took weeks of manual code archaeology can be accomplished in days. This combination of AI-accelerated analysis and human expertise is the foundation of modern AI-enabled engineering workflows.

The Combination: Phased Migration + AI = Optimal Approach

Each migration slice (converting one AngularJS module to React, wrapping one API endpoint, generating tests for one business workflow) is a well-scoped, bounded task. These are precisely the tasks where AI tools deliver their strongest gains (40-70% for codemods and test generation, 40-50% for framework conversion). A big-bang rewrite, by contrast, requires holistic system understanding that exceeds current AI context window limitations.

The teams that execute this combined strategy consistently report delivery timelines 40-50% shorter than traditional migration approaches, with smaller teams and lower total cost. To see how this methodology has been applied, explore Altimi's case studies.

Governance and Measuring Progress

Successful migration projects track: percentage of user traffic routed through the new system (the north-star metric), feature parity completion rate, error rate comparison, deployment frequency on the new stack, and developer velocity metrics. Progressive rollout strategies, starting at 1% of traffic, scaling to 5%, 25%, 50%, and finally 100%, provide natural checkpoints with real production data.
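One common way to implement such a staged rollout is deterministic bucketing: each user is hashed into a stable bucket from 0 to 99 and is routed to the new system when the bucket falls below the current percentage. The sketch below assumes a string user ID and a hypothetical salt; it is one possible approach, not a prescribed one.

```python
import hashlib

ROLLOUT_STAGES = [1, 5, 25, 50, 100]  # percentages from the text above


def bucket(user_id: str, salt: str = "new-frontend") -> int:
    """Stable bucket in [0, 100) derived from a hash of the user ID."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest[:8], 16) % 100


def routed_to_new_system(user_id: str, rollout_percent: int) -> bool:
    """True if this user should be served by the new system at this stage."""
    return bucket(user_id) < rollout_percent


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(10_000)]
    for stage in ROLLOUT_STAGES:
        share = sum(routed_to_new_system(u, stage) for u in users) / len(users)
        print(f"stage {stage:>3}%: {share:.1%} of sample users on the new system")
```

Because the bucket never changes, a user who lands on the new system at 5% stays there at 25% and beyond, which keeps the experience consistent and makes error-rate comparisons between cohorts meaningful.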

Techniques like parallel run comparison and shadow mode deployment allow teams to validate correctness at scale before committing to the cutover. Teams that combine these governance practices with robust CI/CD pipelines and infrastructure automation consistently achieve smoother transitions.
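A parallel run in shadow mode can be sketched as follows: the legacy result is always what the caller receives, while the candidate implementation runs on the same input and any divergence is recorded for review. The function and variable names are illustrative assumptions.

```python
from typing import Any, Callable, List, Tuple

Mismatch = Tuple[Any, Any, Any]  # (request, legacy result, candidate result or error)


def parallel_run(
    legacy: Callable[[Any], Any],
    candidate: Callable[[Any], Any],
    request: Any,
    mismatches: List[Mismatch],
) -> Any:
    """Serve the legacy result; run the candidate in shadow and log divergence."""
    expected = legacy(request)
    try:
        actual = candidate(request)
    except Exception as exc:  # a shadow failure must never affect the user
        mismatches.append((request, expected, repr(exc)))
        return expected
    if actual != expected:
        mismatches.append((request, expected, actual))
    return expected


if __name__ == "__main__":
    log: List[Mismatch] = []
    legacy_total = lambda qty: qty * 2
    new_total = lambda qty: qty * 2 if qty != 3 else 7  # deliberately seeded bug
    results = [parallel_run(legacy_total, new_total, q, log) for q in range(1, 5)]
    print(results)  # always the legacy answers
    print(log)      # the divergent request, for review before cutover
```

A mismatch log that stays empty across production traffic is the evidence that justifies moving to the next rollout stage.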

Key Takeaway: Phased, frontend-first modernization with incremental backend extraction consistently outperforms full rewrites in success rate (~85% vs. ~30%), time-to-first-value (months 2-4 vs. month 18+), and risk profile, and this advantage is amplified by 30-50% when combined with structured AI-assisted development workflows.

Sources & Citations

- [1] Gartner, 'IT Key Metrics Data: IT Spending and Staffing Report,' 2024/2025 Edition.

- [2] IDC, 'Worldwide Black Book: IT Spending Forecast,' 2025.

- [3] Forrester Research, 'The State of Technical Debt,' 2025.

- [4] Stack Overflow, 'Developer Survey Results,' 2023-2025 Editions.

- [5] DORA Team (Google Cloud), 'Accelerate State of DevOps Report,' 2024.

- [6] GitHub Next, 'Research: Quantifying GitHub Copilot's Impact on Developer Productivity and Happiness,' 2022.

- [7] GitHub / Microsoft, 'Copilot Enterprise Longitudinal Telemetry Study,' 2024-2025.

- [8] Google Research, 'DIDACT: Large-Scale AI-Assisted Code Migrations,' ACM, 2024.

- [9] McKinsey & Company, 'The State of AI in 2025,' Global Survey Report, 2025.

- [10] Complexity Science Hub (CSH), 'Generative AI and Software Development Productivity,' Science, 2025.

- [11] METR, 'Measuring the Impact of AI on Experienced Open-Source Developer Productivity,' 2025.

- [12] AWS, 'Experian .NET Modernization Case Study: AWS Transform for .NET,' 2026.

- [13] GlobalLogic, 'AI-Assisted AngularJS to Angular 15 Migration: B2B Marketplace Case Study,' 2025.

- [14] Hexaview Technologies & Kalmantic AI, 'LegacyCodeBench: Evaluating AI Comprehension of COBOL,' 2026.

- [15] Diffblue, 'Automated Unit Test Generation Benchmark: Deterministic vs. LLM-Based Approaches,' 2025.

- [16] Google, 'Rosie: Large-Scale Change Infrastructure for Monorepo Migrations,' internal report cited in DIDACT, 2024.

- [17] GitLab, 'Global DevSecOps Report 2025/2026,' surveying 3,200+ professionals.

- [18] JetBrains, 'State of Developer Ecosystem 2025,' survey of 24,534 developers.

- [19] Faros AI, 'Developer Productivity in the Age of AI: 10,000 Developer Telemetry Study,' 2025.

- [20] Apiiro Security Research, 'AI-Generated Code Vulnerability Trends,' 2025.

- [21] GitClear, 'Coding on Copilot: Code Quality Analysis 2021-2024,' longitudinal study, 2025.

- [22] DX (formerly GetDX), 'Q4 2025 Developer Experience Impact Report,' telemetry from 135,000 developers.

- [23] Standish Group, 'CHAOS Report: Project Success and Failure Trends,' multiple editions (2015-2024).

- [24] Joel Spolsky, 'Things You Should Never Do, Part I,' Joel on Software, April 2000.

- [25] Martin Fowler, 'StranglerFigApplication,' martinfowler.com, 2004 (updated 2019).

- [26] Shopify Engineering, 'Deconstructing the Monolith: Designing Software that Maximizes Developer Productivity,' 2019-2023 blog series.

- [27] UK National Audit Office, 'The National Programme for IT in the NHS: An Update,' Report HC 888, 2011.

- [28] Gartner, 'Predicts 2026: AI Agents Will Transform Enterprise Application Development,' 2025.

Frequently Asked Questions

How long does a phased migration typically take?

A phased migration for a mid-size platform (50-200K lines of code) typically spans 8-14 months from discovery to substantial completion. The key difference from a big-bang rewrite is that users see improvements starting in month 2-4, not month 18. Phase 1 (discovery and frontend modernization) usually delivers visible value within 3-4 months, while backend refactoring continues incrementally in subsequent phases.

Can AI fully automate a legacy migration?

No. The 2025 METR study demonstrated that experienced developers actually worked 19% slower on complex repository tasks when relying heavily on AI, despite perceiving a 20-24% speedup. AI excels at bounded, well-scoped tasks: converting AngularJS templates to React components, generating test suites, automating syntax upgrades. But architectural decisions, business logic validation, and integration testing require human expertise. The optimal model is 'human-directed, AI-accelerated' with developers acting as directors, delegators, and validators.

What about the database layer? Should we migrate that first?

It depends on constraints. If your database version is approaching end-of-life (e.g., MongoDB 4.x), the upgrade may be a prerequisite. However, database upgrades are generally lower-risk than full application rewrites since modern databases maintain backward compatibility. The recommended approach is: upgrade the database in-place (if needed for security/support), then modernize the frontend (immediate user value), then refactor backend services to use the database more effectively.

How do you measure progress during a phased migration?

Successful migration projects track: percentage of user traffic routed through the new system, feature parity completion rate, error rate comparison (old vs. new), deployment frequency on the new stack, and developer velocity metrics (PR cycle time, time to merge). The most critical leading indicator is 'time-to-first-value' – how quickly end users experience improvements on the new platform.

What team size is needed for AI-augmented modernization?

AI-augmented workflows enable smaller teams to deliver at the pace of traditionally larger ones. A typical Phase 1 team includes: 1 Solution Architect, 1-2 Senior Frontend Developers, 1 Backend/Meteor Developer, 1 QA Engineer, and 1 Project Manager – with an AI Software Engineering Lead coordinating the tooling workflow. This is roughly 40-60% smaller than a traditional migration team, with the AI toolset handling code comprehension, test generation, and boilerplate conversion that would otherwise require additional headcount.

When is a big-bang rewrite actually appropriate?

A full rewrite can succeed under rare, specific conditions: the codebase is small (<30K LOC), the team has deep domain expertise, requirements can be frozen during the rewrite, and the old system can remain in maintenance mode indefinitely. Basecamp's successful version transitions are the canonical example – they built a new product rather than rewriting the old one, and allowed existing users to stay on the legacy version. For most enterprise platforms with active users and evolving requirements, phased migration remains the safer choice.